Microsoft has deployed its first batch of custom AI chips in its data centers, with plans for a broader rollout in the coming months. The new chip, named Maia 200, is designed as an AI inference powerhouse, optimized for the compute-intensive task of running AI models in production. Microsoft claims the Maia 200 delivers faster processing than both Amazon's latest Trainium chips and Google's latest Tensor Processing Units.

Microsoft's move is part of a broader trend of cloud giants designing their own AI chips. A key driver is the difficulty and high expense of securing the latest hardware from Nvidia, a supply crunch that shows no signs of easing.

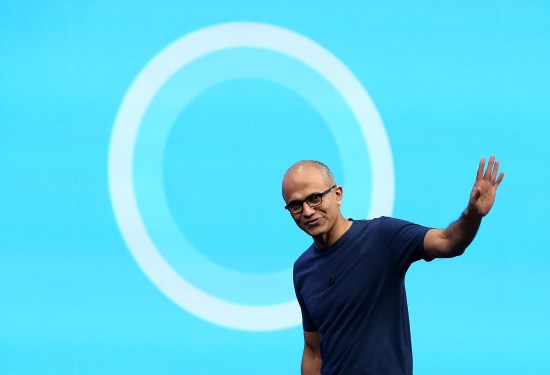

Despite Microsoft introducing its own state-of-the-art chip, CEO Satya Nadella emphasized that the company will continue to buy chips from other manufacturers. He highlighted Microsoft's strong partnerships with Nvidia and AMD, noting that innovation is happening across the industry. Being able to vertically integrate, Nadella said, does not mean the company will rely solely on its own technology.

The first users of the Maia 200 will be Microsoft's internal Superintelligence team, the group building the company's own frontier AI models. The team is led by Mustafa Suleyman, a co-founder of DeepMind. Microsoft's development of its own models is widely seen as a step toward reducing its reliance on external AI makers such as OpenAI and Anthropic.

The Maia 200 will also support OpenAI's models running on Microsoft's Azure cloud platform. Because access to the most advanced AI hardware remains scarce for paying customers and internal teams alike, being first in line matters. In a public post, Suleyman celebrated his team's priority access to the new chips, calling it a big day for their frontier model development.