Google DeepMind is opening up access to Project Genie, its AI tool for creating interactive game worlds from text prompts or images. Starting Thursday, Google AI Ultra subscribers in the U.S. can experiment with this research prototype. The tool is powered by a combination of Google’s latest world model, Genie 3; its image-generation model, Nano Banana Pro; and Gemini.

This move comes five months after the research preview of Genie 3. It is part of a broader push to gather user feedback and training data as DeepMind races to develop more capable world models. World models are AI systems that generate an internal representation of an environment and can be used to predict future outcomes and plan actions. Many AI leaders, including those at DeepMind, believe world models are a crucial step toward achieving artificial general intelligence, or AGI. In the nearer term, labs like DeepMind envision a plan that starts with video games and other entertainment before branching out into training embodied agents, like robots, in simulation.

DeepMind’s release of Project Genie arrives as competition in world models intensifies. Fei-Fei Li’s World Labs released its first commercial product, Marble, late last year. Runway, the AI video-generation startup, has also recently launched a world model. Additionally, former Meta chief scientist Yann LeCun’s startup, AMI Labs, will focus on developing world models.

DeepMind researchers were upfront about the tool’s experimental nature. It can be inconsistent, sometimes impressively generating playable worlds and other times producing baffling results. A research director at DeepMind expressed excitement about having more people access the tool and provide feedback.

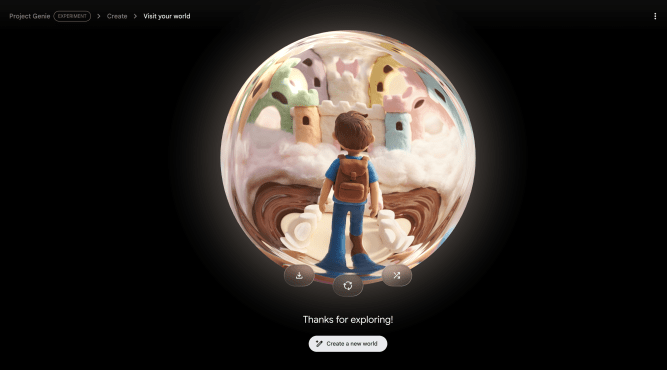

To use Project Genie, you start with a “world sketch” by providing text prompts for both the environment and a main character. Nano Banana Pro creates an image based on the prompts, which you can theoretically modify before Genie uses it to build an interactive world. The modification feature mostly worked, though the model occasionally stumbled, such as giving a character purple hair when green was requested. You can also use real-life photos as a baseline, though results were hit or miss.

Once you are satisfied with the image, Project Genie takes a few seconds to create an explorable world. You can remix existing worlds into new interpretations, explore curated worlds in a gallery, or use a randomizer tool for inspiration. You can then download videos of the world you explored. Currently, DeepMind is granting only 60 seconds of world generation and navigation due to budget and compute constraints. Using the model requires dedicated computing power, which limits how much DeepMind can provide to users. The team stated that extending beyond 60 seconds would offer diminishing returns for testing, as the environments’ dynamism is currently limited.

During testing, the model’s safety guardrails were active. It could not generate nudity or worlds resembling Disney or other copyrighted material. The model excelled at creating worlds based on artistic prompts like watercolors, anime, or classic cartoon aesthetics. However, it struggled with photorealistic or cinematic worlds, often producing results that looked more like video games than real settings. It also had mixed results when given real photos to work with, sometimes rearranging elements and creating a sterile, digital look.

Interactivity is an area DeepMind is working to improve. Characters sometimes walked through walls or solid objects. Navigation using keyboard controls could be non-responsive or send the character in the wrong direction, making movement a challenging experience. The team acknowledged these shortcomings and emphasized that Project Genie is an experimental prototype. They hope to enhance realism and improve interaction capabilities in the future, including giving users more control over actions and environments. The researchers view it not as a finished product for daily use, but as a glimpse of something unique and interesting that cannot be done another way.