Understanding why a deep learning model behaves the way it does remains a major challenge. Whether it’s the odd political outputs from xAI’s Grok, ChatGPT’s tendency toward sycophancy, or the persistent problem of hallucinations, figuring out what happens inside a neural network with billions of parameters is extremely difficult.

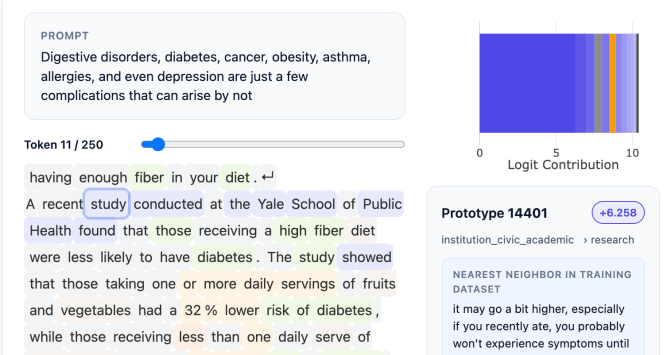

A San Francisco startup called Guide Labs, founded by CEO Julius Adebayo and chief science officer Aya Abdelsalam Ismail, is now offering a potential solution. The company has open-sourced an 8 billion parameter large language model named Steerling-8B. The model uses a new architecture designed to make its behavior easy to interpret: every token it produces can be traced back to its origins in the model’s training data.

This traceability supports simple tasks, such as checking the source material behind facts the model cites, as well as more complex analysis, such as understanding how the model represents humor or gender. As Adebayo explained, with current models, trying to find and control how a concept like gender is encoded across billions of parameters is a fragile process. He calls interpretability one of the “holy grail” questions in the field.

Adebayo began this research during his PhD at MIT, where he co-authored a widely cited 2020 paper showing that existing methods for understanding deep learning models were unreliable. That work led to a different way of building LLMs: developers insert a concept layer into the model that organizes data into traceable categories. This requires more data annotation up front, but the team used other AI models to help with the labeling, which allowed them to train Steerling-8B as their largest proof of concept.
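To make the idea of a concept layer more concrete, here is a minimal sketch of the general technique often called a concept bottleneck: a hidden vector is mapped to scores for named concepts, and the output is computed only from those scores, so every output can be split into per-concept contributions and steered by overriding a concept. This is an illustrative assumption, not Guide Labs’ implementation, which the article does not describe. The class `ConceptBottleneck`, the concept names, and all weights are hypothetical, and tracing tokens back to training data would require additional machinery not shown here.

```python
import math
from typing import Dict, List, Optional


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


class ConceptBottleneck:
    """Hypothetical toy concept layer: outputs depend only on named concept scores."""

    def __init__(self, concept_names: List[str],
                 concept_weights: List[List[float]],
                 output_weights: List[List[float]]) -> None:
        # concept_weights: one row per concept, one column per hidden dimension.
        # output_weights: one row per output logit, one column per concept.
        self.concept_names = concept_names
        self.concept_weights = concept_weights
        self.output_weights = output_weights

    def concepts(self, hidden: List[float],
                 overrides: Optional[Dict[str, float]] = None) -> List[float]:
        # Score each named concept from the hidden vector, squashed into (0, 1).
        scores = [sigmoid(sum(w * h for w, h in zip(row, hidden)))
                  for row in self.concept_weights]
        # Steering: replace selected concept scores with fixed values.
        for name, value in (overrides or {}).items():
            scores[self.concept_names.index(name)] = value
        return scores

    def forward(self, hidden: List[float],
                overrides: Optional[Dict[str, float]] = None) -> List[float]:
        c = self.concepts(hidden, overrides)
        # Each output logit is a weighted sum of concept scores only.
        return [sum(w * ci for w, ci in zip(row, c)) for row in self.output_weights]

    def attribute(self, hidden: List[float], output_index: int,
                  overrides: Optional[Dict[str, float]] = None) -> Dict[str, float]:
        # Per-concept contribution to one logit; the values sum to that logit.
        c = self.concepts(hidden, overrides)
        row = self.output_weights[output_index]
        return {name: w * ci for name, w, ci in zip(self.concept_names, row, c)}


if __name__ == "__main__":
    layer = ConceptBottleneck(
        concept_names=["humor", "gender"],        # hypothetical concepts
        concept_weights=[[2.0, 0.0], [0.0, 2.0]],  # made-up weights
        output_weights=[[1.0, -1.0]],
    )
    hidden = [1.0, -1.0]
    print(layer.forward(hidden))
    print(layer.attribute(hidden, 0))
    print(layer.forward(hidden, overrides={"gender": 0.0}))
```

Because the output only "sees" the named concept scores, overriding `gender` changes the result through that single, inspectable pathway. In a real model these weights would be learned, and training the concept scores would need labeled concept data, which is where the extra annotation effort comes in.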

Adebayo contrasts their method with typical interpretability work, which he likens to doing neuroscience on a model. Instead, Guide Labs engineers the model from the ground up so that extensive reverse-engineering isn’t necessary.

A potential concern with this structured approach is that it might stifle the emergent behaviors that make LLMs so capable, such as generalizing about topics they weren’t explicitly trained on. Adebayo states that his company’s model still exhibits this property. His team tracks what they call “discovered concepts,” like quantum computing, which the model identified on its own.

Adebayo argues this interpretable architecture will become essential. For consumer-facing LLMs, it could allow builders to block the use of copyrighted materials or better control outputs concerning violence or drug abuse. Regulated industries, like finance, will require more controllable models whose decisions can be based on financial records but not on race. Interpretability also matters in science: in protein folding, for instance, researchers need to understand why a model suggests combinations that turn out to work.

According to Adebayo, this model demonstrates that training interpretable models is no longer just a science project but an engineering problem. He believes there is no reason this kind of model cannot match the performance of frontier models, which have many more parameters.

Guide Labs reports that Steerling-8B achieves 90% of the capability of existing models while using less training data, which the company attributes to its novel architecture. Guide Labs, which emerged from Y Combinator and raised a $9 million seed round from Initialized Capital in November 2024, plans to build a larger model and begin offering API and agentic access to users.

Adebayo concludes that the current way of training models is primitive and that democratizing inherent interpretability will be a long-term good for humanity. As we develop super-intelligent models, he argues, you do not want something making mysterious decisions on your behalf.