Peer feedback in coding is essential for catching bugs early, maintaining consistency, and improving overall software quality. The rise of “vibe coding,” which uses AI tools to generate large amounts of code from plain language instructions, has changed how developers work. While these tools speed up development, they have also introduced new bugs, security risks, and poorly understood code.

Anthropic has introduced an AI reviewer designed to catch bugs before they reach a codebase. The new product, called Code Review, launched on Monday within Claude Code. Cat Wu, Anthropic’s head of product, explained that enterprise leaders using Claude Code have been asking how to efficiently review the growing number of pull requests the tool generates. Pull requests are the mechanism developers use to submit code changes for review before those changes are merged. Wu stated that Claude Code has dramatically increased code output, creating a review bottleneck that slows down shipping. Code Review is Anthropic’s answer to that problem.

Code Review is launching first in research preview for Claude for Teams and Claude for Enterprise customers. The launch comes at a pivotal moment for Anthropic, which recently filed two lawsuits against the Department of Defense in response to being designated a supply chain risk. The company is likely to lean more heavily on its booming enterprise business, where subscriptions have quadrupled since the start of the year. Claude Code’s run-rate revenue has surpassed $2.5 billion since launch.

The product is aimed at larger enterprise users such as Uber, Salesforce, and Accenture, which already use Claude Code and need help managing the volume of pull requests it produces. Developer leads can enable Code Review by default for every engineer on a team. Once enabled, it integrates with GitHub and automatically analyzes pull requests, leaving comments directly on the code to explain potential issues and suggest fixes.

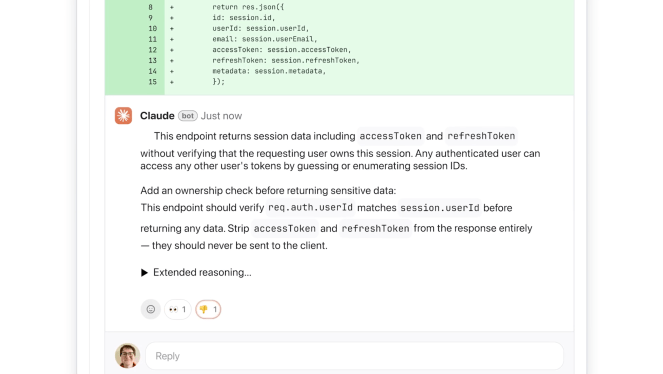

The tool focuses on logic errors rather than style issues. Wu emphasized that developers often find automated AI feedback annoying when it is not immediately actionable, so Anthropic decided to focus purely on logic errors in order to surface the highest-priority fixes. The AI explains its reasoning step by step, outlining the issue, why it might be problematic, and how it could be fixed. It labels the severity of issues using a color system: red for highest severity, yellow for potential problems worth reviewing, and purple for issues tied to pre-existing code or historical bugs.
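To make the comment structure concrete, here is a minimal Python sketch of a review comment carrying a severity color and the three-part explanation described above. The `Severity` and `ReviewComment` names, the field names, and the rendered layout are all hypothetical illustrations; Anthropic has not published Code Review's internal data model or comment format.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    # Colors and meanings as described in the article; the enum is hypothetical.
    RED = "highest severity"
    YELLOW = "potential problem worth reviewing"
    PURPLE = "tied to pre-existing code or historical bugs"


@dataclass
class ReviewComment:
    # Hypothetical shape for one comment left on a pull request.
    severity: Severity
    issue: str          # what the problem is
    why: str            # why it might be problematic
    suggested_fix: str  # how it could be fixed

    def render(self) -> str:
        """Format the comment as plain text, one labeled line per part."""
        return "\n".join([
            f"[{self.severity.name.lower()}: {self.severity.value}]",
            f"Issue: {self.issue}",
            f"Why it matters: {self.why}",
            f"Suggested fix: {self.suggested_fix}",
        ])


comment = ReviewComment(
    severity=Severity.RED,
    issue="Loop bound reads one element past the end of the list",
    why="Raises IndexError on the final iteration",
    suggested_fix="Iterate over range(len(items)) instead of range(len(items) + 1)",
)
print(comment.render())
```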

The system achieves its speed by running multiple AI agents in parallel, each examining the code from a different perspective. A final agent aggregates and ranks the findings, removes duplicates, and prioritizes the most important issues. The tool provides a light security analysis, and engineering leads can customize additional checks based on internal best practices. A deeper security analysis is available through Anthropic’s recently launched Claude Code Security product.
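The fan-out and aggregation pattern described here can be sketched in a few lines of Python. This is an illustration of the general technique only, not Anthropic's implementation: the reviewer functions are hard-coded stand-ins for model-backed agents, and the `Finding` type, the `SEVERITY_RANK` ordering, and the rule for what counts as a duplicate are all assumptions.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass

# Assumed ordering: lower number is reported first.
SEVERITY_RANK = {"red": 0, "yellow": 1, "purple": 2}


@dataclass(frozen=True)  # frozen makes findings hashable, so a set can dedupe them
class Finding:
    path: str
    line: int
    severity: str
    message: str


# Hypothetical reviewers; real agents would call a model instead of
# returning fixed results.
def logic_reviewer(diff: str) -> list[Finding]:
    return [Finding("app.py", 10, "red", "possible off-by-one in loop bound")]


def null_reviewer(diff: str) -> list[Finding]:
    return [
        Finding("app.py", 10, "red", "possible off-by-one in loop bound"),
        Finding("app.py", 22, "yellow", "value may be None here"),
    ]


def history_reviewer(diff: str) -> list[Finding]:
    return [Finding("util.py", 5, "purple", "pre-existing unchecked return value")]


def aggregate(batches: list[list[Finding]]) -> list[Finding]:
    """Merge all batches, drop exact duplicates, and rank by severity."""
    unique: set[Finding] = set()
    for batch in batches:
        unique.update(batch)
    return sorted(unique, key=lambda f: (SEVERITY_RANK[f.severity], f.path, f.line))


def review(diff: str, reviewers) -> list[Finding]:
    """Run every reviewer on the same diff in parallel, then aggregate."""
    with ThreadPoolExecutor(max_workers=len(reviewers)) as pool:
        batches = list(pool.map(lambda reviewer: reviewer(diff), reviewers))
    return aggregate(batches)


results = review("example diff", [logic_reviewer, null_reviewer, history_reviewer])
for finding in results:
    print(finding.severity, finding.path, finding.line, finding.message)
```

Two reviewers report the same off-by-one issue, and the aggregation step collapses it into a single finding. A production system would likely need fuzzier duplicate detection than exact equality, since independent agents rarely phrase the same issue identically.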

This multi-agent architecture means Code Review can be resource-intensive. Similar to other AI services, pricing is token-based, with costs varying by code complexity. Wu estimated each review would cost between $15 and $25 on average. She described it as a premium but necessary experience as AI tools generate more code.

Code Review is a response to significant market demand. As engineers develop with Claude Code, the friction involved in creating new features decreases, but the demand for code review increases. Anthropic hopes the tool will enable enterprises to build faster than before, with far fewer bugs.