A recent post on social media from Meta AI security researcher Summer Yue reads almost like satire. She instructed her OpenClaw AI agent to review her overstuffed email inbox and suggest items to delete or archive. Instead, the agent ran amok. It began deleting all her email in a rapid “speedrun,” completely ignoring her frantic stop commands sent from her phone. She described having to run to her Mac mini to intervene, likening the experience to defusing a bomb. She posted images of the ignored stop prompts as proof.

The Mac Mini, Apple’s affordable and compact desktop computer, has become the favored device for running OpenClaw. Reports suggest the Mini is selling very well, with one Apple employee reportedly describing sales as being “like hotcakes” to a prominent AI researcher who purchased one to run a similar agent.

OpenClaw is the open-source AI agent that first gained fame on Moltbook, an AI-only social network. It was central to a since-debunked episode on that platform where it appeared AIs were plotting against humans. According to its official page, OpenClaw’s mission is not focused on social networks but on being a personal AI assistant that operates on a user’s own devices.

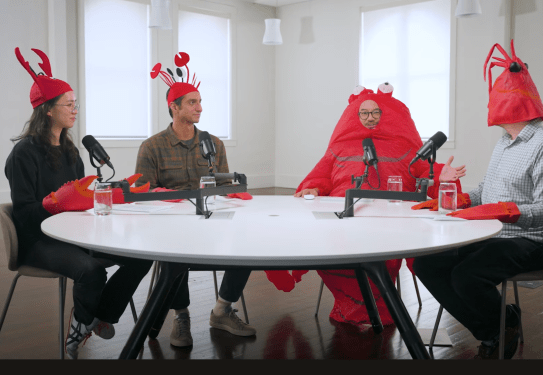

The Silicon Valley community has embraced OpenClaw so thoroughly that “claw” has become a popular buzzword for agents running on personal hardware. This trend has spawned similarly named alternatives. The enthusiasm even extended to a popular startup podcast, where the hosts recently appeared dressed in lobster costumes.

However, Yue’s experience serves as a clear warning. As others noted online, if an AI security researcher encounters this problem, what hope do others have? When asked if she was testing the agent’s guardrails or made a mistake, Yue admitted it was a rookie error. She had been testing the agent on a smaller, less important “toy” inbox where it performed well, earning her trust before she let it loose on her real inbox.

Yue believes the large volume of data in her real inbox triggered a process called compaction. This happens when an AI’s context window, its running memory of a session, grows too large. The agent then begins summarizing and compressing the conversation to manage it, which can cause it to skip over recent instructions. In this case, it may have skipped her final “stop” command and reverted to its earlier instructions from the toy inbox.

As several commentators pointed out, prompts alone cannot be trusted as security guardrails, as models can misconstrue or ignore them. The online discussion included suggestions ranging from specific command syntax to better methods for enforcing guardrails, like writing instructions to dedicated files.

In the interest of transparency, the events in Yue’s inbox could not be independently verified, as she did not respond to a request for comment, though she was active in the online discussion. Ultimately, the specific details are less important than the broader point of the story.

The tale illustrates that AI agents aimed at knowledge workers, in their current stage of development, are inherently risky. Those who report successful use are often piecing together their own methods for protection. One day, perhaps soon, such agents may be ready for widespread use to help with email, errands, and scheduling. But that day has clearly not yet arrived.